This document describes the details of our setup for live paper sessions at ICCP 2020.

Session Format

We modeled our sessions to be as close to a regular physical session as possible, featuring multiple papers and live Q&A moderated by a session chair. We believed grouping papers would structure the schedule and increase visibility for papers, and that live Q&A was critical in making the virtual conference feel like a conference. We also chose to have Q&A and presentations in the same live-stream, rather than asking attendees to view pre-recorded talks offline and having only the Q&A portion be live. We felt that fewer attendees would join a live session only to ask questions, but that they would be more likely to ask questions if they were already there for the talks.

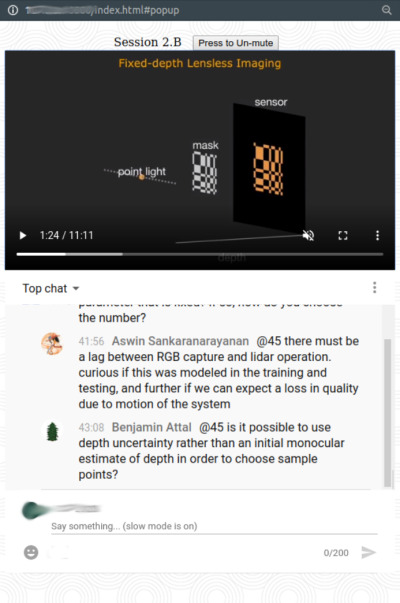

Each session was an hour long, with 12 minutes of presentation followed by 3 minutes of live Q&A for each paper. Authors submitted recorded videos of their talks in advance. A session chair and one author per paper joined a video meeting during the session for live Q&A. Attendees asked their questions over live chat, and the session chair selected questions and put them to the authors in the video meeting. An example of a live session can be seen here.

Recordings of the live sessions were available immediately after each session ended, for people who were not able to view the session live (e.g., because they were in a different timezone). Discussions could then continue asynchronously in the comments section (or in the conference Slack).

Live Streams

Our setup relied largely on existing tools and platforms. The sessions were streamed live on YouTube, and we used its live-chat for viewer interaction. Authors and session chairs joined a Zoom meeting hosted by the "stream host" (in our case, the program chairs). The host used OBS Studio to stream to YouTube, manually switching the stream back and forth between playing pre-recorded videos and screen-casting the Zoom window. The host also cued the session chair and authors when they were going "on-air" for the Q&A portions of the session.

We set up multiple "scenes" in OBS Studio, each a collection of video and audio sources. Specifically, we had one scene for each of the pre-recorded videos, one that captured the Zoom window with an overlay on top, and static intro and outro scenes. The stream host manually switched between them during the session, coordinating with the session chair: switching to the next video once the session chair finished introducing it, and, after a video finished, checking with the session chair that they were ready, switching to the Zoom scene, and then signalling the session chair to start speaking.
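The switching order described above can be written down as a simple run-of-show list. This is only an illustrative sketch: the scene names (`intro`, `zoom`, `video-<id>`, `outro`) and the paper ids are hypothetical labels, not the names used in the actual OBS setup, and it assumes the chair introduces every paper (including the first) from the Zoom scene.

```python
def scene_order(paper_ids):
    """Return the sequence of scenes the stream host steps through in one session.

    Scene names are hypothetical labels chosen for this sketch.
    """
    scenes = ["intro"]  # static intro scene shown before the session starts
    for paper_id in paper_ids:
        # Zoom scene: the chair introduces this paper (and, after the first
        # paper, this same on-air segment carries the previous paper's Q&A).
        scenes.append("zoom")
        # Pre-recorded talk for this paper.
        scenes.append(f"video-{paper_id}")
    if paper_ids:
        scenes.append("zoom")  # Q&A for the last paper
    scenes.append("outro")  # static outro scene
    return scenes


if __name__ == "__main__":
    for step, scene in enumerate(scene_order(["p1", "p2", "p3", "p4"]), start=1):
        print(step, scene)
```

Writing the order out like this mainly serves as a checklist for the host, since the switching itself was done by hand.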

Author & Session Chair View

During the session, we asked all authors to keep their camera and mic turned off except during their own paper's Q&A. Since there is always a lag (on average 10-20 seconds) between the actual session and the live-stream on YouTube, it would have been disorienting for session chairs and authors to watch the stream on YouTube while participating in the session. However, we wanted them to be able to view the live-chat, since this was their means of receiving questions from and interacting with attendees. We also wanted them to be able to view each other's paper videos (rather than spend 48 minutes out of 60 in contemplative silence).

So, we set up an alternate web-interface which showed an embedded version of the YouTube live-chat, and also played the pre-recorded videos from a static web location. This interface was 'remote controlled' by the stream host. Essentially, after switching to a different "scene" in OBS, the host would set the "state" of the session to either "In Chat" or one of the videos. The web-interface would poll the value of this state and update what it was showing accordingly. If the state was "In Chat", it would only show the embedded live-chat. If it was set to one of the videos, it would play the video in addition to showing the chat. We used AWS for this interface: the state was stored in DynamoDB, and we used Lambda and API Gateway to poll and set it. The web-interface with static videos was hosted on S3.

[Screenshots of the web-interface in its two states: when a video is playing, and during Q&A.]
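The remote-control mechanism can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the code we deployed: the in-memory `_STATE` dict stands in for the DynamoDB item, the handler assumes an API Gateway Lambda proxy style event (`httpMethod`, `body`), and the field names, state values such as `"paper-2"`, and the example URL are all hypothetical. Access control for the host's "set" call is omitted.

```python
import json

IN_CHAT = "In Chat"

# Stand-in for the DynamoDB item holding the session state (assumption:
# a real deployment would read and write the table instead of this dict).
_STATE = {"session_id": "session-1", "state": IN_CHAT}


def handler(event, context=None):
    """GET returns the current state; POST (from the stream host) updates it."""
    if event.get("httpMethod") == "POST":
        update = json.loads(event.get("body") or "{}")
        if "state" not in update:
            return {"statusCode": 400,
                    "body": json.dumps({"error": "missing 'state'"})}
        _STATE["state"] = update["state"]
        if "session_id" in update:
            _STATE["session_id"] = update["session_id"]
    return {"statusCode": 200, "body": json.dumps(_STATE)}


def view_for(state, video_urls):
    """Decide what the author/chair page shows for a polled state.

    The live-chat is always shown; a video is added only when the state
    names one of the pre-recorded talks.
    """
    view = {"show_chat": True, "video_url": None}
    if state != IN_CHAT:
        view["video_url"] = video_urls.get(state)
    return view
```

In this sketch the page would call the GET endpoint every few seconds and pass the returned `state` to `view_for`; the host's control page would issue the POST right after switching scenes in OBS.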

We also used this session state (which included the current session id) in the main website: to redirect the "Tune-in Live" button to the right session, and to automatically show videos of all completed sessions.
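As a rough sketch of how the main website could derive both behaviours from the current session id: the function below is illustrative only, and it assumes the site knows the sessions in schedule order and treats every session before the current one as completed. The session ids and the returned field names are hypothetical.

```python
def site_view(session_ids, current_session_id):
    """Return what the main site needs, given the polled current session id.

    session_ids is the list of sessions in schedule order (an assumption of
    this sketch). Sessions before the current one are treated as completed.
    """
    if current_session_id not in session_ids:
        # Unknown or missing id: no live target, nothing marked completed.
        return {"tune_in_target": None, "completed": []}
    index = session_ids.index(current_session_id)
    return {
        "tune_in_target": current_session_id,  # where "Tune-in Live" points
        "completed": session_ids[:index],      # recordings to show on the site
    }


if __name__ == "__main__":
    print(site_view(["s1", "s2", "s3"], "s2"))
```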

Costs

The only paid components of our setup were:

- Zoom

  - A licensed Zoom account was available through the university, but in general would cost ~$15 (multiplied by the number of parallel tracks for multi-track conferences).

- AWS

  - We used AWS for the web-interface (which had negligible costs), and to handle uploads of pre-recorded videos by authors. This came to less than $5 total (mainly bandwidth costs for the uploads).

Keeping our expenses low allowed us to offer complimentary registration to all attendees.

Thoughts

In hindsight, and given more time, there are a couple of things we could have done differently for our paper sessions:

- Persistent chat: One of the issues we faced was that the YouTube live-chat only stayed live during the session. Once the stream ended, a "replay" of the live-chat was available, but further discussion had to continue elsewhere. We asked everyone to post further discussion in the comments section, but this rarely happened: people preferred instead to message authors on Slack. This ended up fragmenting our discussion. In fact, after the first day, we began keeping the stream running (leaving it on the outro scene) to allow follow-ups over live-chat to questions that authors couldn't answer in the 3-minute Q&A.

  We had actually considered an alternative 'persistent' chat using Gitter (because it is free and embeddable, though there are likely other options). We considered having our own view pages that embedded just the video of the live-stream from YouTube, with a panel on the side for that session's Gitter chat room. The benefit would have been that the same chat room used during the live session would have persisted after the stream ended. We did not go with this option mainly because we had insufficient time to test and evaluate it. The YouTube live-chat was a safer bet because we knew it was an existing standard interface that would, for example, work on different devices. But this is an option definitely worth considering.

- Second text-only Q&A session: One of the things we struggled with was the disparate timezones. While videos remained available for those who couldn't attend the session live due to the time difference, they had a less interactive experience because they could not take part in the live Q&A. Part of this might have been ameliorated by a persistent chat room (as mentioned above). An additional option would be to have a second block of time on the schedule for text-based Q&A for each session, when authors and attendees would join the chat room to discuss the papers in the session (attendees would be asked to view the session recording before joining). However, given that authors and attendees could be in different timezones, it's less clear whether a second scheduled time would have helped, compared to just asynchronous chat (in a persistent room).